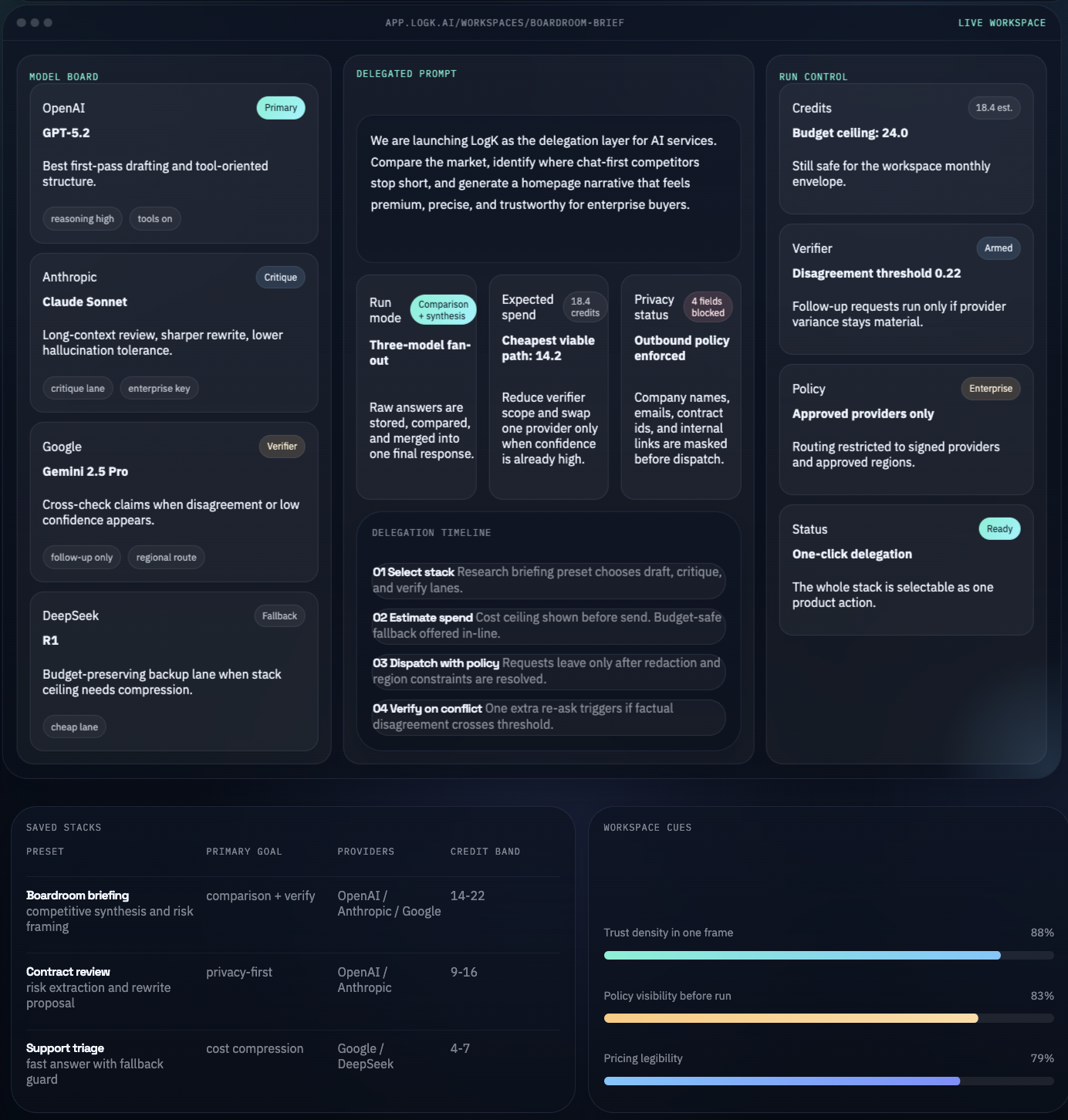

Select multiple models from one dashboard

Users can select a single model card or several at once, depending on whether they want a single answer, a side-by-side comparison, or an aggregated synthesis.

- Model cards that show provider, speed, quality, and modality

- One click to compare multiple answers

- Recommendation layer for the current task
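
As a rough illustration of how the pieces above could fit together, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `ModelCard` fields simply mirror the four attributes listed on the cards, the 1-5 rating scales are invented, and `Mode`, `validate_selection`, and `recommend` are hypothetical names, not part of any actual product API.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    """The three outcomes a user can ask for (names are hypothetical)."""
    SINGLE = "single answer"
    COMPARE = "side-by-side comparison"
    SYNTHESIZE = "aggregated synthesis"


@dataclass(frozen=True)
class ModelCard:
    # Fields mirror the card attributes listed above; the scales are assumed.
    name: str
    provider: str
    speed: int      # hypothetical 1-5 rating, higher is faster
    quality: int    # hypothetical 1-5 rating, higher is better
    modality: str   # e.g. "text" or "image"


def validate_selection(cards: list[ModelCard], mode: Mode) -> None:
    """Raise ValueError if the number of selected cards does not fit the mode."""
    if mode is Mode.SINGLE and len(cards) != 1:
        raise ValueError("single-answer mode needs exactly one model card")
    if mode is not Mode.SINGLE and len(cards) < 2:
        raise ValueError(f"{mode.value} needs at least two model cards")


def recommend(
    cards: list[ModelCard], modality: str, prefer: str = "quality"
) -> list[ModelCard]:
    """Toy recommendation layer: filter by modality, then rank by one attribute."""
    if prefer not in ("quality", "speed"):
        raise ValueError("prefer must be 'quality' or 'speed'")
    matching = [c for c in cards if c.modality == modality]
    return sorted(matching, key=lambda c: getattr(c, prefer), reverse=True)
```

A caller might build a small catalog of cards, call `recommend(catalog, "text")` to rank candidates for a text task, and then pass the first two results to `validate_selection(..., Mode.COMPARE)` before requesting a side-by-side comparison. A real recommendation layer would likely weigh more signals than a single attribute; the single-key sort is only there to keep the sketch short.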